In search of the sexiest map

I spent today working on a Stupid Hackathon idea, shamelessly stolen from Tom Brown, that sadly did not get built at the event itself.

The Google Vision API provides an interface for pushing images through Google’s SafeSearch algorithm, which returns the likelihood that an image contains adult, violent, medical, or spoof content. By testing thousands of satellite images, we can find what Google believes to be the sexiest map.

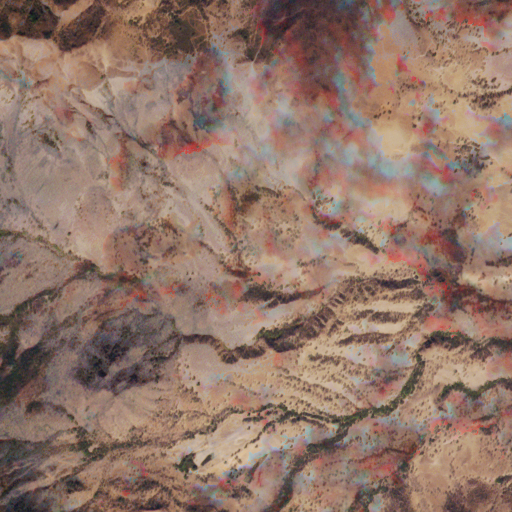

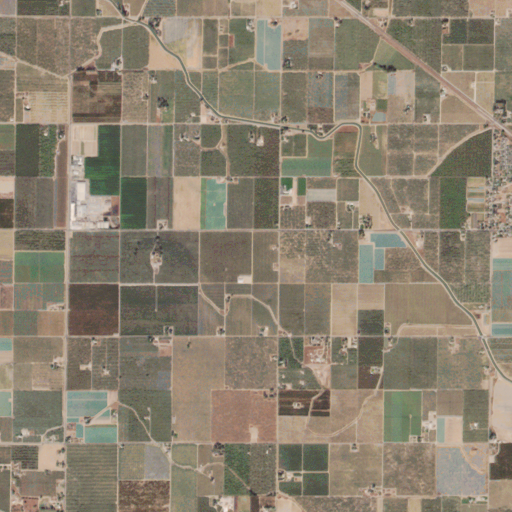

Because Planet Labs requires registration for access to their full Open California data, I had to scrape what I could from their public Google Maps-style interface. I wrote a Python script that takes four tiles from zoom level 14 and combines them into one 512x512 pixel image. By themselves, the images are frequently abstractly beautiful:

I scraped about 5000 of these before feeding them to the Google Vision API.
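For the curious, here is a minimal sketch of the tile arithmetic behind that kind of scraping. It is not the script used for this post. It assumes the map uses the common Web Mercator XYZ tiling scheme with 256-pixel tiles (which is what four tiles in a 512x512 image implies), and the function names `latlon_to_tile` and `block_layout` are made up for illustration.

```python
import math

# Assumed tile edge in pixels: a 2x2 block of these gives 512x512.
TILE_SIZE = 256


def latlon_to_tile(lat, lon, zoom):
    """Return the (x, y) index of the XYZ tile containing a point.

    Assumes the standard Web Mercator tiling used by most web maps.
    """
    n = 2 ** zoom
    x = int((lon + 180.0) / 360.0 * n)
    lat_rad = math.radians(lat)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    return x, y


def block_layout(x, y):
    """Describe a 2x2 block of tiles whose top-left tile is (x, y).

    Each entry is (tile_x, tile_y, paste_left, paste_top), where the
    last two values are the pixel offsets of that tile in the
    combined 512x512 image.
    """
    return [
        (x + dx, y + dy, dx * TILE_SIZE, dy * TILE_SIZE)
        for dy in (0, 1)
        for dx in (0, 1)
    ]
```

Each entry from `block_layout` says which tile to download and where to paste it. The actual download and pasting would need an HTTP client and an imaging library, which are left out here.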

Unfortunately, though I had thought that the Google Vision API would return a float likelihood value for each of the categories, it actually abstracts that into several qualitative likelihood values, ranging from “very unlikely” to “very likely.”
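Since the API only gives back labels, sorting images by "sexiness" comes down to ranking those labels. Below is a rough sketch of that, assuming each result has already been parsed into a dict mapping category names to the API's likelihood names (`UNKNOWN`, `VERY_UNLIKELY`, `UNLIKELY`, `POSSIBLE`, `LIKELY`, `VERY_LIKELY`). The helper names `flagged` and `tally` are hypothetical.

```python
from collections import Counter

# The Vision API's likelihood names, from least to most likely.
LIKELIHOOD_ORDER = [
    "UNKNOWN",
    "VERY_UNLIKELY",
    "UNLIKELY",
    "POSSIBLE",
    "LIKELY",
    "VERY_LIKELY",
]
RANK = {name: i for i, name in enumerate(LIKELIHOOD_ORDER)}


def flagged(annotation, threshold="POSSIBLE"):
    """Return the sorted categories rated at or above the threshold.

    `annotation` is assumed to look like
    {"adult": "LIKELY", "violence": "VERY_UNLIKELY", ...}.
    Unrecognized labels are treated like "UNKNOWN".
    """
    cutoff = RANK[threshold]
    return sorted(
        category
        for category, level in annotation.items()
        if RANK.get(level, 0) >= cutoff
    )


def tally(annotations, threshold="POSSIBLE"):
    """Count how many images were flagged in each category."""
    counts = Counter()
    for annotation in annotations:
        counts.update(flagged(annotation, threshold))
    return counts
```

Calling `tally(results, "LIKELY")` on a list of such dicts would count only the images rated "likely" or "very likely" in each category.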

Of the 5000 images, Google rated two as “likely” to contain adult content and one as “likely” to contain violent content. Are you ready for the two sexiest satellite images of California?

I don’t really understand either, but whatever floats your boat, Google.

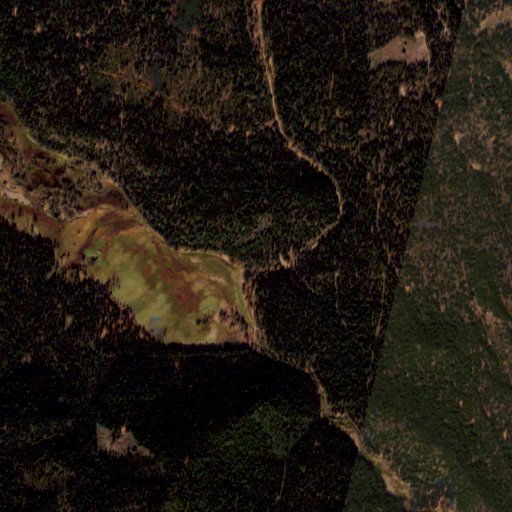

And the satellite image Google thought most likely to contain violent content:

This one maybe makes more sense, but it’s still pretty hard to imagine.

Google also found several images that “possibly” contained unsafe content: 74 with violence, 27 with medical content, and 9 with adult content.

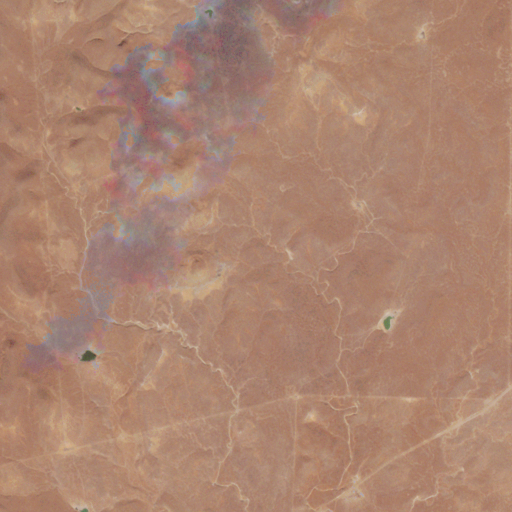

Of these, most of the adult images were also views of rectangular farms and fields, sometimes with rivers snaking through them. I wonder if Google is using something kind of old-school for this, like a connected-element/limb analyzer?

Maybe, if you squint.

The most understandable mistakes were the images that Google categorized as possibly containing medical content. Who can forget the rolling, golden skin of California, with its stretching unpaved wrinkles connecting dry ranches to dusty market towns?

Good job, Google. Good job.

Code for scraping Planet Labs images:

Code for talking with the Google Vision API, based on Google’s Text Detection starter code: